After three days of hectic effort spent installing, fixing errors and changing configuration, I managed to run Hadoop 3.x.x on Windows 10 with Java 12 installed. A series of errors delayed me, and I finally got through them by reading each console line by line and resolving every problem. Here I discuss those errors in detail rather than the installation steps, which are readily available on the internet.

Follow the link below to install and configure Hadoop 3.x on Windows: http://toppertips.com/hadoop-3-0-installation-on-windows/ . Just follow all the configuration steps in it as prescribed.


The main rule to follow: whenever you run into an issue where Hadoop is not running properly, pay close attention to every console window you have (two console windows open when you run start-dfs.cmd, and two more when you run start-yarn.cmd).


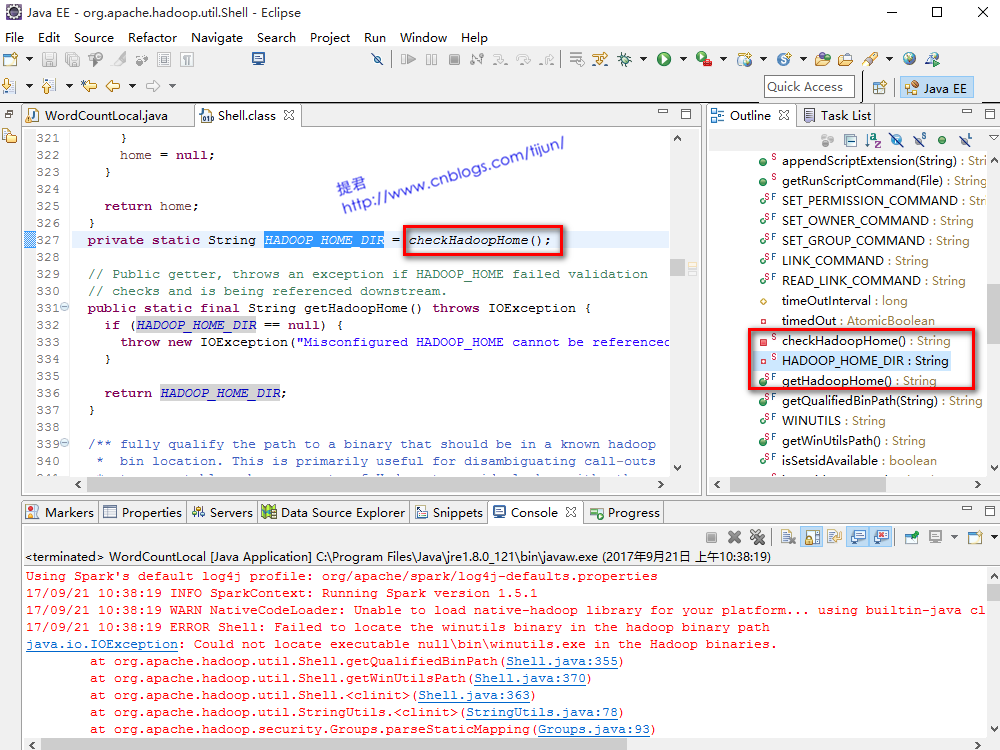

ERROR#1] When the console shows that the native IO libraries are not loaded, it means hadoop.dll and/or winutils.exe, which Hadoop 3.x needs on Windows, is missing from Hadoop's bin directory. You can download the entire bin directory for Hadoop 3.1.x from the GitHub repository below: https://github.com/s911415/apache-hadoop-3.1.0-winutils . Replace the bin directory in your Hadoop folder with it and run the following commands in this order:

stop-dfs.cmd

stop-yarn.cmd

hdfs namenode -format

hdfs datanode -format

start-dfs.cmd

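start-yarn.cmd

Two cautions about this sequence: hdfs namenode -format erases the existing HDFS metadata, and hdfs datanode does not accept a -format option (it only prints its usage and exits), so that step does not reset the DataNode. To reset DataNode storage, delete its data folder as described in ERROR#5.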
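The ERROR#1 check can be scripted before restarting the daemons. This is a minimal sketch, assuming HADOOP_HOME points at your Hadoop root folder and that winutils.exe and hadoop.dll are the two files to confirm; the helper name missing_native_files is hypothetical and not part of Hadoop.

```python
import os
from pathlib import Path
from typing import List

# The two native files discussed in ERROR#1 (assumed to be the ones to verify).
REQUIRED_BIN_FILES = ("winutils.exe", "hadoop.dll")


def missing_native_files(hadoop_home: str) -> List[str]:
    """Return the required native files that are absent from <hadoop_home>/bin."""
    bin_dir = Path(hadoop_home) / "bin"
    return [name for name in REQUIRED_BIN_FILES if not (bin_dir / name).is_file()]


if __name__ == "__main__":
    home = os.environ.get("HADOOP_HOME")
    if not home:
        print("HADOOP_HOME is not set")
    else:
        missing = missing_native_files(home)
        print("Missing from bin:", ", ".join(missing) if missing else "nothing")
```

Run it from the same console you use for start-dfs.cmd so it sees the same HADOOP_HOME. It only checks that the files exist, not that they match your Hadoop version.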

ERROR#2] If the console shows “ERROR namenode.NameNode: Failed to start namenode. java.lang.IllegalArgumentException: No class configured for C”, Hadoop is reading the drive letter C: in a storage path as if it were a URI scheme. Go to the hadoop-3.1.2\etc\hadoop folder, open hdfs-site.xml, and make sure the directory paths do not include the root drive.

Make sure forward slashes are used to separate the path segments. If you use backslashes, the console shows a path-not-found exception.
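A minimal Python sketch of the drive-less, forward-slash values described above. It assumes the standard Hadoop 3 keys dfs.namenode.name.dir and dfs.datanode.data.dir, and a C:\hadoop-3.1.2\data\... layout modelled on the datanode path quoted in ERROR#5; the helper names are hypothetical.

```python
import xml.etree.ElementTree as ET
from pathlib import PureWindowsPath


def hdfs_dir_value(windows_path: str) -> str:
    """Turn C:\\hadoop-3.1.2\\data\\namenode into /hadoop-3.1.2/data/namenode.

    The drive letter is dropped and backslashes become forward slashes.
    """
    p = PureWindowsPath(windows_path)
    if not p.root:
        raise ValueError(f"expected a rooted path, got {windows_path!r}")
    # p.parts[0] is the anchor (for example C: plus a backslash); keep the folders after it.
    return "/" + "/".join(p.parts[1:])


def property_element(name: str, value: str) -> ET.Element:
    """Build one <property><name>..</name><value>..</value></property> entry."""
    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = name
    ET.SubElement(prop, "value").text = value
    return prop


if __name__ == "__main__":
    conf = ET.Element("configuration")
    # Hypothetical folder layout; substitute the folders you created during installation.
    conf.append(property_element("dfs.namenode.name.dir",
                                 hdfs_dir_value(r"C:\hadoop-3.1.2\data\namenode")))
    conf.append(property_element("dfs.datanode.data.dir",
                                 hdfs_dir_value(r"C:\hadoop-3.1.2\data\datanode")))
    print(ET.tostring(conf, encoding="unicode"))
```

Paste the printed property elements inside the configuration element of hdfs-site.xml. A drive-less path such as /hadoop-3.1.2/data/namenode should resolve against the drive Hadoop runs from, so this sketch assumes everything lives on C:.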

ERROR#3] After the Hadoop portal is up and running (http://localhost:9870/), you may hit the following error when you open the “Browse file system” menu: “Failed to retrieve data from /webhdfs/v1/?op=LISTSTATUS: Server Error”

This happens because the javax.activation package was removed from the JDK in Java 11, and you are running Java 11 or later. (Look closely at the console and you will see a matching error message there as well.) Go to https://jar-download.com/?search_box=javax.activation and download the activation jar file. Paste it into “<hadoop root directory>\share\hadoop\common”. Close all Hadoop consoles and run the commands in the order listed under ERROR#1. The issue is resolved!
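After restarting, you can confirm the fix without the browser by calling the same WebHDFS LISTSTATUS URL that appears in the error message. This is a minimal Python sketch, assuming the NameNode web port 9870 used above; the helper names are hypothetical.

```python
import json
import urllib.request
from typing import List


def liststatus_url(host: str = "localhost", port: int = 9870, path: str = "/") -> str:
    """Build the WebHDFS LISTSTATUS URL quoted in the ERROR#3 message."""
    return f"http://{host}:{port}/webhdfs/v1{path}?op=LISTSTATUS"


def entry_names(payload: dict) -> List[str]:
    """Pull the file and folder names out of a LISTSTATUS JSON response."""
    return [status["pathSuffix"] for status in payload["FileStatuses"]["FileStatus"]]


def list_hdfs_dir(host: str = "localhost", port: int = 9870, path: str = "/") -> List[str]:
    # urlopen raises urllib.error.HTTPError if the NameNode still answers
    # with the 500 Server Error that the browser page reports.
    with urllib.request.urlopen(liststatus_url(host, port, path), timeout=10) as resp:
        return entry_names(json.load(resp))


if __name__ == "__main__":
    print(list_hdfs_dir())
```

An empty list simply means the HDFS root has no entries yet; an HTTPError means the activation jar is still not being picked up.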

ERROR#4] After fixing the above error, I hit a permission error while trying to upload a file or create a directory in the Hadoop file system: “Permission denied: user=dr.who, access=WRITE, inode="/":Binukumar.S:supergroup:drwxr-xr-x”. This happens because requests from the web UI run as the default static user dr.who, which has no write permission on the root directory (owned by Binukumar.S with mode drwxr-xr-x). For quick results, open hdfs-site.xml (<hadoop root>\etc\hadoop) and add a property that turns off HDFS permission checking.

That bypasses the permission check for testing/staging. It is not recommended for production, though.
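Assuming the property in question is dfs.permissions.enabled set to false (the standard HDFS key for turning permission checks off), a minimal Python sketch can print the element to paste into hdfs-site.xml and check whether a configuration already contains it. The helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumed property: the standard HDFS switch for permission checking.
PERMISSION_KEY = "dfs.permissions.enabled"


def permission_property_xml() -> str:
    """Return the <property> element that turns permission checking off."""
    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = PERMISSION_KEY
    ET.SubElement(prop, "value").text = "false"
    return ET.tostring(prop, encoding="unicode")


def permissions_disabled(config_xml: str) -> bool:
    """True if the given hdfs-site.xml text already sets the key to false."""
    root = ET.fromstring(config_xml)
    for prop in root.iter("property"):
        if (prop.findtext("name") or "").strip() == PERMISSION_KEY:
            return (prop.findtext("value") or "").strip().lower() == "false"
    return False


if __name__ == "__main__":
    print(permission_property_xml())
```

Restart HDFS after editing hdfs-site.xml so the NameNode reads the new setting.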

ERROR#5] Even though folders can be created in the file system, when you start uploading files you may be disappointed again by the message “Couldn’t find datanode to write file. Forbidden”. This is because the DataNode did not start properly, or at all. A common reason is that the NameNode was formatted again at some point while the old DataNode data was kept, so the clusterIDs no longer match.

Again, if you look closely at the console opened by start-dfs.cmd, you can see a related message: “Failed to add storage directory [DISK]file:/C:/hadoop-3.1.2/data/datanode java.io.IOException: Incompatible clusterIDs”. Here is the solution: go to the datanode folder you created during installation and delete it, along with the files in it, manually. Run all the commands again and you are good to go. Now you can upload files to HDFS successfully.
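Before deleting the datanode folder, you can confirm the mismatch. Both the NameNode and the DataNode storage folders contain current\VERSION, a key=value properties file with a clusterID entry. This minimal Python sketch compares the two; the namenode folder is a hypothetical counterpart of the datanode path in the error message, and the helper names are hypothetical too.

```python
from pathlib import Path
from typing import Dict


def read_version_file(storage_dir: str) -> Dict[str, str]:
    """Parse <storage_dir>/current/VERSION, a simple key=value properties file."""
    props: Dict[str, str] = {}
    text = (Path(storage_dir) / "current" / "VERSION").read_text(encoding="utf-8")
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and the timestamp comment written at the top of the file.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def cluster_ids_match(namenode_dir: str, datanode_dir: str) -> bool:
    """Compare the clusterID recorded by the NameNode and the DataNode storage."""
    nn_id = read_version_file(namenode_dir).get("clusterID")
    dn_id = read_version_file(datanode_dir).get("clusterID")
    return nn_id is not None and nn_id == dn_id


if __name__ == "__main__":
    # Hypothetical folders; use the ones configured in hdfs-site.xml.
    nn, dn = r"C:\hadoop-3.1.2\data\namenode", r"C:\hadoop-3.1.2\data\datanode"
    print("clusterIDs match" if cluster_ids_match(nn, dn) else "clusterIDs differ")
```

The sketch raises FileNotFoundError if a folder has no current\VERSION yet, which usually means that daemon has never started successfully.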


The issue report below shows a related winutils error, this time when starting PySpark on Windows:

- Status: Closed
- Resolution: Not A Problem
- Fix Version/s: None

C:\Users\WEI>pyspark
Python 3.5.6 |Anaconda custom (64-bit)| (default, Aug 26 2018, 16:05:27) [MSC v.1900 64 bit (AMD64)] on win32
Type 'help', 'copyright', 'credits' or 'license' for more information.
2018-09-14 21:12:39 ERROR Shell:397 - Failed to locate the winutils binary in the hadoop binary path
java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.
    at org.apache.hadoop.util.Shell.getQualifiedBinPath(Shell.java:379)
    at org.apache.hadoop.util.Shell.getWinUtilsPath(Shell.java:394)
    at org.apache.hadoop.util.Shell.<clinit>(Shell.java:387)
    at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:80)
    at org.apache.hadoop.security.SecurityUtil.getAuthenticationMethod(SecurityUtil.java:611)
    at org.apache.hadoop.security.UserGroupInformation.initialize(UserGroupInformation.java:273)
    at org.apache.hadoop.security.UserGroupInformation.ensureInitialized(UserGroupInformation.java:261)
    at org.apache.hadoop.security.UserGroupInformation.loginUserFromSubject(UserGroupInformation.java:791)
    at org.apache.hadoop.security.UserGroupInformation.getLoginUser(UserGroupInformation.java:761)
    at org.apache.hadoop.security.UserGroupInformation.getCurrentUser(UserGroupInformation.java:634)
    at org.apache.spark.util.Utils$$anonfun$getCurrentUserName$1.apply(Utils.scala:2467)
    at org.apache.spark.util.Utils$$anonfun$getCurrentUserName$1.apply(Utils.scala:2467)
    at scala.Option.getOrElse(Option.scala:121)
    at org.apache.spark.util.Utils$.getCurrentUserName(Utils.scala:2467)
    at org.apache.spark.SecurityManager.<init>(SecurityManager.scala:220)
    at org.apache.spark.deploy.SparkSubmit$.secMgr$lzycompute$1(SparkSubmit.scala:408)
    at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$secMgr$1(SparkSubmit.scala:408)
    at org.apache.spark.deploy.SparkSubmit$$anonfun$doPrepareSubmitEnvironment$7.apply(SparkSubmit.scala:416)
    at org.apache.spark.deploy.SparkSubmit$$anonfun$doPrepareSubmitEnvironment$7.apply(SparkSubmit.scala:416)
    at scala.Option.map(Option.scala:146)
    at org.apache.spark.deploy.SparkSubmit$.doPrepareSubmitEnvironment(SparkSubmit.scala:415)
    at org.apache.spark.deploy.SparkSubmit$.prepareSubmitEnvironment(SparkSubmit.scala:250)
    at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:171)
    at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:137)
    at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
2018-09-14 21:12:39 WARN NativeCodeLoader:62 - Unable to load native-hadoop library for your platform.. using builtin-java classes where applicable
Setting default log level to 'WARN'.
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /__ / .__/\_,_/_/ /_/\_\   version 2.3.1
      /_/


Using Python version 3.5.6 (default, Aug 26 2018 16:05:27)

SparkSession available as 'spark'.

>>>


- Assignee: Unassigned
- Reporter: WEI PENG
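In the log above, Hadoop looks for null\bin\winutils.exe, which suggests no Hadoop home was configured for the PySpark session. The minimal Python sketch below assumes Hadoop 2.x's lookup order (the hadoop.home.dir Java system property first, then the HADOOP_HOME environment variable) and shows how an unset home turns into that literal null prefix. It only roughly mirrors Hadoop's Shell class, and the helper names are hypothetical.

```python
import ntpath
import os
from typing import Mapping, Optional


def resolve_hadoop_home(system_props: Mapping[str, str],
                        env: Mapping[str, str]) -> Optional[str]:
    """Roughly mirror Hadoop 2.x Shell: -Dhadoop.home.dir first, then HADOOP_HOME."""
    home = system_props.get("hadoop.home.dir")
    if home is None:
        home = env.get("HADOOP_HOME")
    return home


def expected_winutils_path(home: Optional[str]) -> str:
    """Path Hadoop probes for winutils.exe; a missing home shows up as 'null'."""
    # Java string concatenation renders a null reference as the text "null".
    prefix = "null" if home is None else home
    return ntpath.join(prefix, "bin", "winutils.exe")


if __name__ == "__main__":
    # Java system properties are not visible from Python, so pass an empty mapping.
    print(expected_winutils_path(resolve_hadoop_home({}, os.environ)))
```

If it prints null\bin\winutils.exe, set HADOOP_HOME to the folder that contains bin\winutils.exe and open a new console before starting pyspark.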

